Mubert

audio

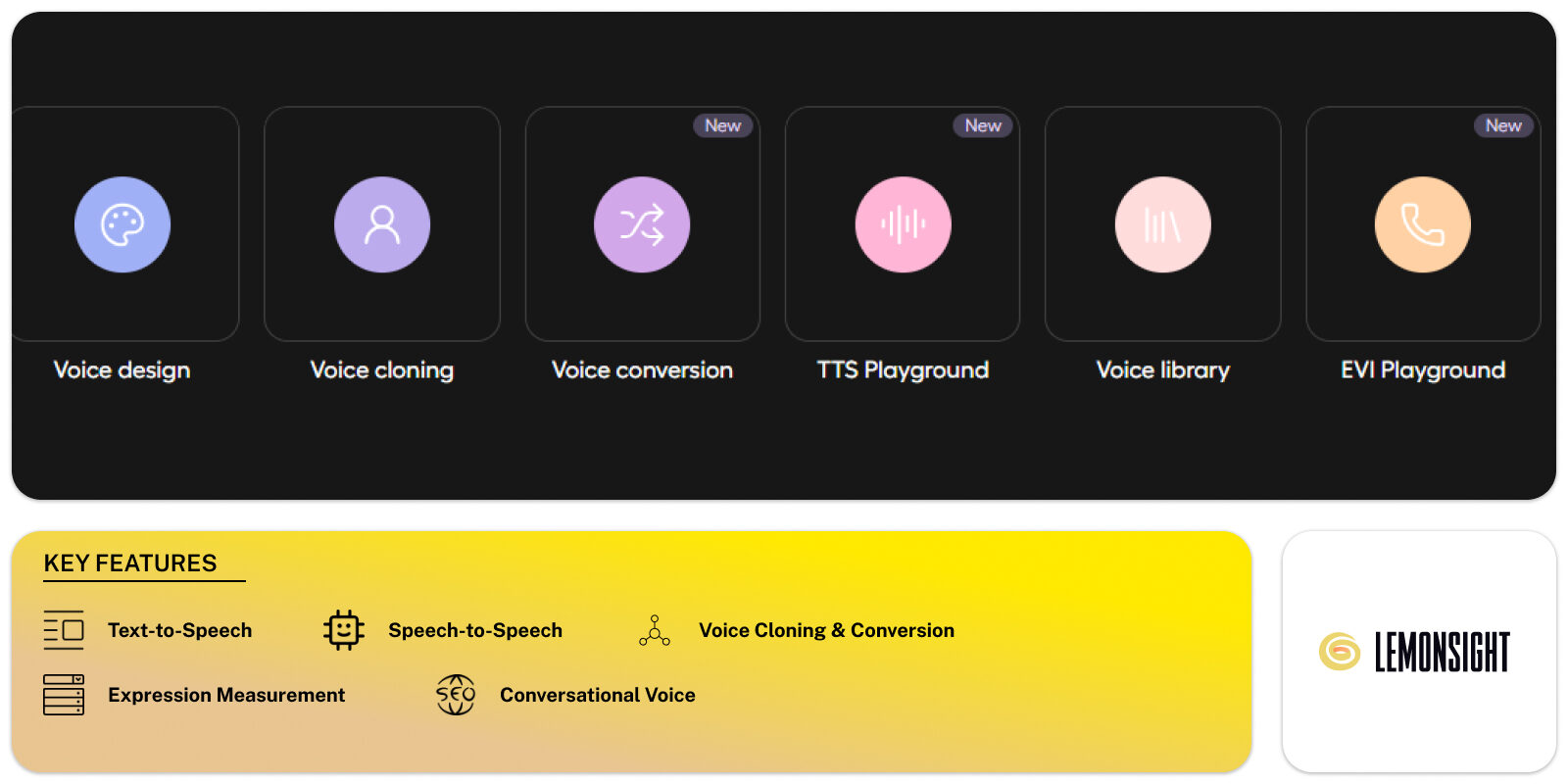

Hume AI is an empathic voice AI platform that generates emotionally expressive speech for creators, developers, and enterprises. The platform produces studio-quality text-to-speech and speech-to-speech output through purpose-built voice models and a speech-language architecture. Hume AI targets content creators, game developers, contact-center teams, and product teams that need low-latency, multi-language voice generation. Its primary modules are Octave (text-to-speech), EVI (Empathic Voice Interface, speech-to-speech), Expression Measurement, Conversational Voice, and Creator Studio, a UI for designing voices. SDKs and APIs for Python, TypeScript, Swift, React, and .NET connect voice workflows to web, mobile, and backend systems. Hume AI publishes language coverage, latency figures, and sample audio so users can evaluate the models.

Hume AI processes text or incoming audio to produce expressive speech with controlled prosody and emotion. The text-to-speech flow (Octave) accepts text plus acting or emotion instructions, converts the text into a prosody-aware representation, and then renders audio in the selected voice. The speech-to-speech flow (EVI) ingests user audio, measures vocal features, applies a speech-language model, and then generates a response with matching cadence and emotion. Expression Measurement analyzes audio and video and returns vocal and facial expression metrics as timestamped results or streaming events. Requests go to REST/HTTP endpoints, which the SDKs wrap to support low-latency calls and concurrent connections. The platform can also call external LLMs in hybrid pipelines and offers voice cloning and voice conversion.
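The Octave flow above pairs the text to speak with an acting instruction and a voice choice. The sketch below shows one way to assemble such a request using only the Python standard library. It is a hypothetical illustration, not Hume's documented client: the endpoint URL, the `utterances`/`text`/`description`/`voice` field names, and the `X-Hume-Api-Key` header are assumptions to verify against Hume's API reference, and `build_tts_request` and `make_request` are made-up helper names. The request is built but never sent.

```python
import json
import urllib.request
from typing import Optional

# ASSUMPTION: endpoint and field names are illustrative; confirm in Hume's API docs.
TTS_URL = "https://api.hume.ai/v0/tts"


def build_tts_request(text: str, description: Optional[str] = None,
                      voice_name: Optional[str] = None) -> dict:
    """Build a hypothetical JSON body for one Octave utterance.

    text:        the words to speak
    description: acting/emotion instruction (e.g. "warm, slightly excited")
    voice_name:  optional saved voice to render with
    """
    if not text.strip():
        raise ValueError("text must be non-empty")
    utterance = {"text": text}
    if description:
        utterance["description"] = description
    if voice_name:
        utterance["voice"] = {"name": voice_name}
    return {"utterances": [utterance]}


def make_request(body: dict, api_key: str) -> urllib.request.Request:
    """Wrap the body in a POST request object without sending it.

    ASSUMPTION: the API key travels in an "X-Hume-Api-Key" header.
    """
    return urllib.request.Request(
        TTS_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "X-Hume-Api-Key": api_key},
        method="POST",
    )


if __name__ == "__main__":
    body = build_tts_request("Welcome back!", description="warm, slightly excited")
    print(json.dumps(body))
```

Keeping payload construction separate from transport makes it easy to check the body offline before you pass the request to `urllib.request.urlopen` or swap in one of the official SDKs.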

| Plan | Monthly price (USD) | Annual price (USD) |
|---|---|---|
| Free | $0 | Contact sales |
| Starter | $3 | Contact sales |
| Creator | $7 | Contact sales |
| Pro | $70 | Contact sales |
| Scale | $200 | Contact sales |
| Business | $500 | Contact sales |
| Enterprise | Custom | Custom (contact sales) |